Understanding Streaming Data Applications with Cloudflow

Stream processing is the discipline and related set of techniques used to extract information from unbounded data. Streaming applications apply such techniques to create applications and systems able to extract actionable insights from data as it arrives into our processing system.

The growing popularity of this technology is driven by the increasing availability of data from many sources and the need of enterprises to speed up their reaction time to that data.

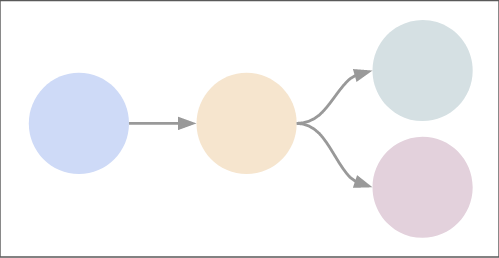

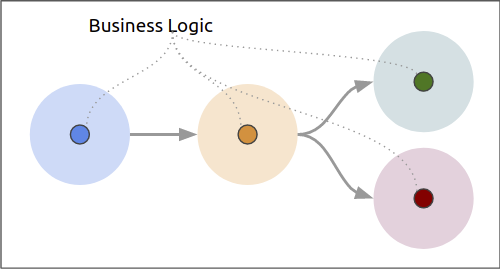

We characterize streaming applications as a connected graph of stream-processing components, where each component specializes on a particular task, using the 'right tool for the job' premise.

In An Abstract Streaming Application, we illustrate — generically — an application that processes data events.

At the left, we recognize an initial stage where we want to capture or accept data. It could be an HTTP endpoint to accept data from remote clients, a connection to a Kafka topic, or to an internal system in an enterprise. The following component represents a processing phase that applies some logic to the data, like business rules, statistical data analysis, or a machine learning model that implements the business aspect of the application. This component may add additional information to the event and send it as valid data to an external system or flag the data as invalid and report it. We show these two different data output paths with the green and red stages to the right.

Each of these stages presents different application requirements, scalability concerns, and usually, also different deployment strategies on Kubernetes.

The Cloudflow Approach to Creating Streaming Applications

Cloudflow offers a novel solution to approach the creation, deployment, and management of streaming applications on Kubernetes. For the developer, it offers a toolbox that facilitates the application creation. On the deployment side, it comes with a set of extensions that makes Cloudflow streaming applications native to Kubernetes.

Let us break down the typical develop-test-package-deploy lifecycle of an application and see how Cloudflow helps you at each stage to accelerate the end-to-end process.

Develop with Streamlets: Focus on the Code that Matters

When creating streaming applications, a large portion of the development effort goes into the mechanics of streaming, like configuring and setting up connections to the messaging system, de/ser/ializing messages, setting up fault-recovery storage, etc, to finally add our business logic to it.

Cloudflow introduces a new component model, called Streamlets. The Streamlet model offers an abstraction to:

-

Identify the connections of a component as inlets and outlets

-

Attach the schema of the data for each connection, and

-

Provide an entry point for the business logic of the component.

Cloudflow offers Streamlet backend implementations in Akka Streams, Apache Spark Structured Streaming, and Apache Flink.

For each supported Streamlet implementation, the users can write their business logic in the native API of the backend, using either Java or Scala.

|

Apache Spark Structured Streaming is currently offered only in Scala. |

Let us illustrate this important point with an example:

class MovingAverageSparklet extends SparkStreamlet { (1)

val in = AvroInlet[Data]("in")

val out = AvroOutlet[Agg]("out", _.src)

val shape = StreamletShape(in, out) (2)

override def createLogic() = new SparkStreamletLogic {

override def buildStreamingQueries = { (3)

val groupedData = readStream(in) (4)

.withColumn("ts", $"timestamp".cast(TimestampType))

.withWatermark("ts", "1 minutes")

.groupBy(window($"ts", "1 minute", "30 seconds"), $"src", $"gauge").agg(avg($"value") as "avg")

val query = groupedData.select($"src", $"gauge", $"avg" as "value").as[Agg]

writeStream(query, out, OutputMode.Append).toQueryExecution

}

}

}| 1 | SparkStreamlet is the base class that defines Streamlet for the Apache Spark backend |

| 2 | The StreamletShape defines the inlet(s) and outlet(s) of the Streamlet, each inlet/outlet is declared with its corresponding data type. |

| 3 | buildStreamletQueries is the entry point for the Streamlet logic |

| 4 | The code provided is written in pure Spark Structured Streaming code, minus the boilerplate to create sessions, connections, checkpoints, etc. |

Akka Streams and Flink-based Streamlets follow the same pattern.

Additionally, Cloudflow can be extended with new streaming backends.

Composition and Testing: Sandbox Execution of a Complete Application

Once we have developed the different components of our application, we compose the end-to-end flow by creating a blueprint.

The blueprint declares the Streamlet instances that belong to an application and how each Streamlet instance connects to the others.

The following example shows a simple blueprint definition:

blueprint exampleblueprint {

streamlets { (1)

ingress = sensors.SparkRandomGenDataIngress

process = sensors.MovingAverageSparklet

egress = sensors.SparkConsoleEgress

}

connections { (2)

ingress.out = [process.in]

process.out = [egress.in]

}

}| 1 | streamlets section: declares instances from the Streamlets available in the application (or its dependencies) |

| 2 | connections section: declares how the inlets/outlets of a streamlet should be connected. |

It is interesting to note that the declaration of instances in the streamlets section lets us use multiple instances of a Streamlet, with potentially different configurations, leading to component reuse.

With the blueprint file in place, we can verify whether our application is properly connected.

For that, we use the verifyBlueprint function, delivered by the sbt-cloudflow plugin.

$ sbt verifyBlueprint

[info] Loading settings for project global-plugins from plugins.sbt ...

[info] Loading global plugins from /home/maasg/.sbt/1.0/plugins

[info] Loading settings for project spark-sensors-build from cloudflow-plugins.sbt,plugins.sbt ...

[info] Loading project definition from cloudflow/examples/spark-sensors/project

[info] Loading settings for project sparkSensors from build.sbt ...

[info] Set current project to spark-sensors (in build file:cloudflow/examples/spark-sensors/)

[info] Streamlet 'sensors.MovingAverageSparklet' found

[info] Streamlet 'sensors.SparkConsoleEgress' found

[info] Streamlet 'sensors.SparkRandomGenDataIngress' found

[success] /cloudflow/examples/spark-sensors/src/main/blueprint/blueprint.conf verified.The blueprint verification checks that all the connections between Streamlets are compatible, using the schema information provided by the Streamlet.

Once the blueprint verification succeeds, we know that the components of our streaming application can talk to each other.

We are now ready to run the complete application.

Enter the Sandbox

Cloudflow comes with a local execution mode called Sandbox.

The Sandbox instantiates all Streamlets of an application’s blueprint with their connections in a single, local JVM.

We can see it in action in the following screencast.

The Sandbox provides you with a minimalistic operational version of the complete application.

You can use it to exercise the functionality of the application end-to-end and verify that it behaves as expected.

The Sandbox gives you a blazing fast feedback loop for the functionality you are developing, removing the need to have to go through the full package, deploy, and launch on a remote cluster.

Packaging: Build-generated Artifacts

Once we are confident that the application functions as we expect, we can build a package.

Cloudflow applications are packaged as a single docker image that contains the necessary dependencies to run the different Streamlets on their respective backends.

That image gets published to a docker repository of your choice.

Deployment: kubectl Extensions for a YAML-less experience

At this stage, we are ready to deploy our application to a Cloudflow-enabled Kubernetes cluster.

In contrast with the usual YAML-full experience that typical K8s deployments require, with Cloudflow we use the blueprint information and the Streamlet definitions to auto-generate an application deployment.

Cloudflow also comes with a kubectl plugin that augments the capabilities of your local kubectl installation to work with Cloudflow applications.

You use your usual kubectl commands to auth against your target cluster.

Then, with the kubectl cloudflow plugin we can deploy and manage a Cloudflow application as a single logical unit.

$ kubectl cloudflow deploy docker-registry/app-image:versionThis method is not only dev-friendly, but also compatible with the typical CI/CD deployments to allow you to take the application from dev to production in a controlled way.

Conclusion

As a developer, Cloudflow gives you a set of powerful tools to accelerate the application development process: - The Streamlet API, lets you focus on business value and use your knowledge of widely popular streaming runtimes, like Akka Streams, Apache Spark Structured Streaming, and Apache Flink to create full-fledged streaming applications. - The blueprint lets you easily compose your application with the peace of mind that a verification phase, informed by schema definitions, provides. - The Sandbox lets you exercise the complete application in seconds, giving you a real-time feedback loop to speed up the debugging and validation phases.

And with a fully developed application, the kubectl cloudflow plugin gives you the ability to deploy and control the lifecycle of your application on an enabled K8s cluster.

Cloudflow takes away the pain of creating and deploying distributed applications on Kubernetes, speeds up your development process, and gives you full control over the operational deployment.

In a nutshell, it gives you distributed application development super-powers on Kubernetes.